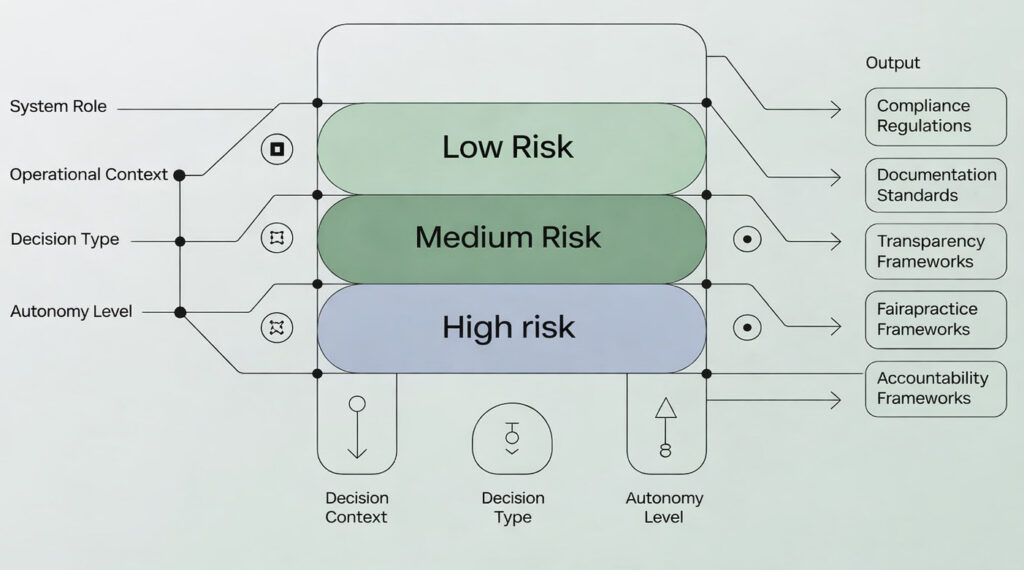

The EU AI Act introduces differentiated obligations based on the risk classification of AI systems. For organizations adopting a DORG, classification is not straightforward: it depends on the role assigned to the digital employee, the operational context, the type of decisions it takes part in, and the degree of autonomy it is granted. A DORG supporting credit decisions, such as assessing the creditworthiness of individuals, falls within an area the Act explicitly lists as high-risk (Annex III), and therefore has a radically different risk profile from one managing internal communications.

This competency provides the interpretive framework developed by DORG Society for applying the EU AI Act to the digital employee ecosystem: how to classify your DORG’s risk based on its assigned role, what obligations arise for the organization, how to structure compliance documentation, and how the human oversight system and DORG telemetry meet the transparency and traceability requirements set forth by the regulation.

Main contents:

- AI Act risk classification applied to DORGs
- Obligations for limited-risk and high-risk systems
- Documentation and transparency requirements
- Role of the Human Oversight Supervisor in regulatory compliance
- Alignment between the DORG Code of Conduct and EU AI Act requirements
- Regulatory updates and their impact on the ecosystem